To imagine the “tech home of the future,” one needs only to look back at the dynamic past of information technology. Although few of us were old enough to have been conscious of the event, 1956 marked the commercial arrival of the hard disk drive. In that year, IBM introduced the 350 Disk File as a companion to its 305 computer. The drive had fifty 24-inch platters, with a total capacity of five million characters.

Nearly two decades later, IBM introduced the 3340 “Winchester” disk system, showcasing a then-massive 30 MB capacity and an astonishingly fast 30-millisecond access time. This unit was the first to use a sealed head/disk assembly, the design found in almost all disk drives today.

For the first quarter century of their existence, hard disks were huge mechanical monsters with surprisingly fragile components, and were therefore relegated to the carefully controlled environments of data centers. (Remember those pictures of eight-foot racks of hardware tended by multiple technicians in white lab coats?) Because of this, hard disks were not something anyone outside the world of big, mainframe-style computing even thought about. In fact, in its factory configuration, the original IBM PC (IBM 5150) of the early 1980s was not even equipped with a hard drive.

Nearly a decade elapsed between that first pseudo-accessible hard disk product in 1973 and 1980-81, when the then little-known Seagate Technology introduced the first compact 5.25-inch hard drive, the ST-506, with a capacity of five megabytes. That product, or its IBM equivalent, appeared in the first real PCs in 1983. The IBM PC AT cost nearly $6,000 and contained an Intel 80286 processor running at 6 MHz at its introduction (later 8 MHz), 256 KB to 16 MB of RAM, 64 KB of ROM, a 5.25-inch high-density (1.2 MB) floppy drive and an internal 20 MB hard drive.

Just for reference, let’s remember our prefixes:

Kilo (KB) = one thousand bytes

Mega (MB) = one million bytes

Giga (GB) = one billion bytes

Tera (TB) = one trillion bytes

Just think about trying to use something with those performance capabilities in today’s residential applications. It could probably deal with the environmental control and not much more; remember that it ran on DOS, not Windows. Today, 5 MB would hold about one four-minute MP3 file encoded at 192 kbps. That’s why you can get thousands of songs on your 30 GB MP3 player.
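The MP3 arithmetic can be checked with a few lines of Python. This is only a rough sketch: it assumes a constant bit rate, decimal prefixes (1 MB = one million bytes) and no file overhead, and the function name is made up for illustration.

```python
def stream_size_megabytes(bitrate_kbps: float, seconds: float) -> float:
    """Size in decimal megabytes of a constant-bit-rate stream (assumed model)."""
    bits = bitrate_kbps * 1000 * seconds
    return bits / 8 / 1_000_000  # 8 bits per byte, one million bytes per MB

# One four-minute song encoded at 192 kbps
song_mb = stream_size_megabytes(192, 4 * 60)

# How many such songs fit on a 30 GB (30,000 MB) player
songs_on_player = int(30_000 / song_mb)

print(f"One song: {song_mb:.2f} MB")
print(f"Songs on a 30 GB player: {songs_on_player}")
```

Under these assumptions a song comes out a little under 6 MB, so a 30 GB player holds on the order of five thousand of them.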

The explosion of hard disk capacity in the last two decades resembles Moore’s Law on steroids. With early personal computers, a drive with a 20-megabyte capacity was considered extremely large. By the mid- to late 1990s, hard drives with capacities of 1 gigabyte and greater became available. At the 2003 CES, the big noise was from 250 GB drives approaching $1/GB in cost. As of early 2005, the “smallest” desktop hard disk in production has a capacity of 40 gigabytes, while the largest-capacity drives approach half a terabyte and are expected to exceed that mark by year’s end. Today, you can carry in your pocket a device not much larger than a 50-cent piece that will hold no less than 5 GB, or 1,000 times as much as that early 5 MB drive.

The appearance in the late 1990s of high-speed external interfaces such as USB and IEEE 1394 (FireWire), plus the migration of commercial Ethernet-type network capabilities into the residential market, has made external disk systems extremely popular. This has been especially true for users who want to move large amounts of data between two or more locations, such as between a PVR and a display device.

Why is all this important to the residential systems integrator? Because 21st-century media and entertainment requirements have essentially no upper limit on the amount of storage and streaming capability that they will require.

Let’s look at what is and will be required. Uncompressed HD can require 1.5 Gbps data rates. This will probably mean using some kind of fiber-optic line with a capacity higher than the required rate, such as 2.4 Gbps, to move it around. Today’s MPEG-2 compressed HD streams operate between 12 and 22 Mbps, and DVD-quality signals require between 5 and 10 Mbps. Some newer schemes propose to compress the video to around half of the 12 to 18 Mbps currently needed using MPEG-2. As a reference, here are some general parameters for required transmission speeds for standard HDTV signals:

MPEG-2 compression: 20 Mbps

MPEG-4 compression: 10 Mbps

Windows Media 9 (WM9): 7.5 Mbps

These “lower” rate signals can be handled by coax or copper for the distances usually found in residential applications. To deliver signals at these data rates, however, you must have a storage/playback system capable of originating the streams, especially if it is to serve multiple users in multiple locations simultaneously.

It makes a great deal of sense to consider centralized mass storage for this purpose. Achieving sufficient capacity and supporting multiple simultaneous high-data-rate streams means using high-speed, high-density hard disks. While stand-alone devices like PVRs are fine for NTSC or even limited HD capability, once you start looking at storing multiple two-hour HD movies, hundreds of CDs, all of the kids’ digital pictures, etc., you can rapidly reach 500 GB of consumed space.
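To see how quickly HD movies eat into that 500 GB, here is a rough Python sketch that sizes a two-hour movie at the three compressed bit rates listed earlier (20, 10 and 7.5 Mbps). It assumes a constant bit rate, decimal gigabytes and no audio or container overhead; the function and variable names are illustrative only.

```python
def movie_size_gb(bitrate_mbps: float, hours: float) -> float:
    """Approximate size in decimal gigabytes of a constant-bit-rate stream."""
    bits = bitrate_mbps * 1_000_000 * hours * 3600
    return bits / 8 / 1_000_000_000

# The three compressed HD bit rates from the list above, in Mbps
rates_mbps = [20, 10, 7.5]

# Size of one two-hour movie at each rate
sizes_gb = {rate: movie_size_gb(rate, 2) for rate in rates_mbps}

# Whole movies that fit in 500 GB at each rate
movies_in_500gb = {rate: int(500 // size) for rate, size in sizes_gb.items()}

for rate in rates_mbps:
    print(f"{rate} Mbps: {sizes_gb[rate]:.2f} GB per movie, "
          f"{movies_in_500gb[rate]} movies in 500 GB")
```

At the 20 Mbps rate, a single two-hour movie takes about 18 GB, so fewer than 30 of them fill 500 GB before any music or photos are added.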

The solution to this exponential data increase is to create multi-terabyte central media storage. Using the 400 to 500 GB drives launched at the 2005 CES by Hitachi Global Storage, Seagate and others, a chassis of less than 2RU could hold four such drives (1.6 to 2 TB in total) plus the needed hardware and interfaces.

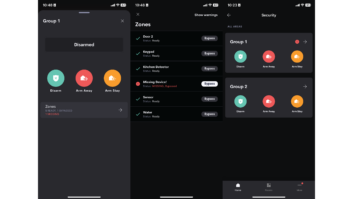

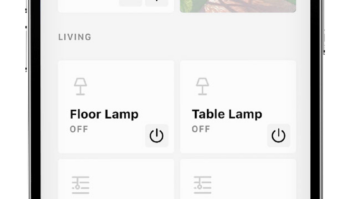

But how do you manage this massive data pile? New GUI and software solutions from companies like Sonic Solutions, AMD and others can provide the kind of “find it, play it and move it” flexibility with no confusing media center-style interface, and without the need for a complete PC chassis to run the system. While no actual product has been formally shown, it’s not very far away. It would be well worth your time to investigate what these solutions providers are offering today and how you can get a head start on the competition by creating customized capabilities for your customers. Costs are not excessive, and hardware reliability is far better than it was even three or four years ago.

Let’s presume that in a few years, customers will routinely accumulate 2.5 terabytes of “digital stuff.” That is equivalent to 2,500 gigabytes, or 2.5 million megabytes, which is enough data to easily fill more than 30 standard PC-style 80 GB hard drives. To put that amount of data into some realistic framework, the 2003 World Book Encyclopedia ships on four CD-ROMs holding the standard 650 MB each (slightly more than 2.5 GB total). You would need to buy about 1,000 sets of the World Book to reach 2.5 terabytes.

Now, if that customer tried to send his data to his brother over a 56K modem, it would take 11-plus years. Over a 1.5 Mbps DSL or a T1 line, it would take roughly 150 days. Over a 10 Tbps DWDM (dense wavelength-division multiplexing) ultra-high-speed fiber-optic connection, it would take two to three seconds.
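These transfer times are easy to estimate yourself. The Python sketch below assumes a decimal terabyte (one trillion bytes), a link running flat out at its rated speed and no protocol overhead, so real-world transfers would be slower. The names are illustrative only.

```python
SECONDS_PER_DAY = 86_400
DAYS_PER_YEAR = 365.25

def transfer_seconds(size_bytes: float, link_bits_per_second: float) -> float:
    """Ideal transfer time: total bits divided by the rated link speed."""
    return size_bytes * 8 / link_bits_per_second

collection_bytes = 2.5e12  # 2.5 TB of "digital stuff"

modem_seconds = transfer_seconds(collection_bytes, 56e3)   # 56K modem
dsl_seconds = transfer_seconds(collection_bytes, 1.5e6)    # 1.5 Mbps DSL
fiber_seconds = transfer_seconds(collection_bytes, 10e12)  # 10 Tbps DWDM fiber

modem_years = modem_seconds / SECONDS_PER_DAY / DAYS_PER_YEAR
dsl_days = dsl_seconds / SECONDS_PER_DAY

print(f"56K modem:    {modem_years:.1f} years")
print(f"1.5 Mbps DSL: {dsl_days:.0f} days")
print(f"10 Tbps DWDM: {fiber_seconds:.1f} seconds")
```

The results land in the same range as the figures quoted above. Small differences come from rounding, from the exact line rate used (a T1 runs at 1.544 Mbps) and from whether a terabyte is counted in decimal or binary units.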

As we face an increasingly data-dense world, speed and storage capacity will be the core issues needing solutions. Prepare now, and your customers will thank you for it later.

Frederick J. Ampel ([email protected]) is president of Technology Visions in Overland Park, Kansas.